
Chat GPT and other AI guff


The potential for asking AI to make images for language teaching material is amazing but it isn't quite right at the moment.

I recently made a cross-section picture of an office and factory building, but there were weird things like the receptionist sitting on a sofa and a forklift truck with wheels on three sides. You can't just ask it to change things, either. I asked it to put the IT guy fixing a PC under the desk, but it put the receptionist there instead. Weird.

> Leroy Ambrose said: I tell you what, we can have a good old laugh at AI, but the implications of it, in my field at least, are pretty terrifying.

Care to unpack? I'm trying to get my head around the clearly enormous implications for humanity.

So far, my thinking is that there will be mass redundancies in the next ten years or so, and therefore a need for universal basic income.

AI will help us combat a whole bunch of diseases, so life expectancy will increase.

Use of AI can/will reduce human cognitive capacity.

There is the threat of AI eventually enslaving humans in some form (Asimov, right?).

Also, it's evolving rapidly. If you messed around with it in early 2024 and weren't impressed, it's an entirely different beast now.

Really interested to hear more from others.

> Leuth said: I feel like fuckin Cassandra about this. And there are millions like me. But there are billions who are going to cheer us all into the abyss.

At least no one will believe her account of your sexual liaison.

Here's what ChatGPT says:

Over the next 20 years, AI is likely to reshape work, education, healthcare, politics, and daily life, roughly on the scale of the internet or industrialization. Here’s a balanced look at the most probable implications:

---

## 1) Work & the Economy

### 🔁 Automation + Augmentation

AI systems from companies like OpenAI, Google DeepMind, and Microsoft are already automating cognitive tasks (analysis, writing, coding).

**Likely impacts:**

* Routine white-collar jobs (data entry, basic legal drafting, bookkeeping) heavily automated.
* Many jobs *augmented* rather than replaced (doctors, engineers, teachers using AI copilots).
* New roles emerge: AI trainers, auditors, ethicists, human-AI interaction designers.
* Productivity increases, but wealth distribution may widen without policy changes.

### ⚖️ Inequality Risks

High-skill AI users and AI-owning firms may benefit disproportionately, potentially increasing economic concentration unless governments intervene.

---

## 2) Healthcare & Longevity

AI-driven diagnostics and drug discovery (e.g., protein structure breakthroughs like DeepMind’s AlphaFold) could:

* Detect diseases earlier and more accurately.
* Personalize treatment plans.
* Accelerate new drug development.
* Expand access through AI health assistants.

Over 20 years, this could significantly increase average healthspan, not just lifespan.

---

## 3) Education & Human Intelligence

AI tutors may provide personalized, 24/7 instruction tailored to each student’s learning style.

**Possible outcomes:**

* Higher global literacy and skill levels.
* Education becomes more self-paced and adaptive.
* Memorization becomes less important; critical thinking becomes more central.
* Risk of overreliance on AI reducing deep learning if poorly integrated.

---

## 4) Creativity & Culture

AI tools are already reshaping art, music, writing, and film.

We may see:

* Hybrid human-AI creative industries.
* Lower barrier to content creation.
* Explosion of personalized media.
* Ongoing legal battles over copyright and authorship.

Human creativity likely won’t disappear, but the definition of “creator” may expand.

---

## 5) Politics, Information & Trust

AI-generated media will:

* Make misinformation cheaper and more convincing.
* Challenge traditional verification systems.
* Increase the importance of digital literacy.

Governments worldwide (e.g., in the European Union and United States) are already drafting AI regulations. The next 20 years will likely involve:

* Global AI governance frameworks.
* Struggles between innovation and control.
* AI used in public administration and defense.

---

## 6) Human Identity & Psychology

If AI becomes highly capable conversationally and creatively:

* People may form emotional attachments to AI companions.
* The line between “tool” and “collaborator” blurs.
* Philosophical questions about consciousness and rights may intensify.

---

## 7) Existential & Long-Term Risks

Some experts (e.g., Geoffrey Hinton and Nick Bostrom) warn about advanced AI surpassing human intelligence.

Possible long-term scenarios:

* Highly beneficial superintelligence accelerating science.
* Loss of human control if misaligned.
* Strong international coordination to manage risks.

This remains uncertain, but is taken seriously by many researchers.

---

# Most Likely Overall Outcome (20-Year View)

The most probable scenario is **transformative but not apocalyptic**:

* AI becomes deeply integrated into most professions.
* Major economic shifts but not total job collapse.
* Regulation evolves unevenly.
* Human-AI collaboration becomes normal.
* Society adapts gradually, though not without turbulence.

---

If you’d like, I can:

* Break this down by industry (finance, law, engineering, etc.)
* Focus specifically on risks vs benefits
* Or explore what this means personally for your career trajectory

> aliwibble said: Absolutely howling at the Grok news summary I got presented with earlier this evening.

I love these howlers, and they're funnier because they sound so authoritative.

Which brings me to another point: GenAI answers are often so verbose that it is tempting to accept them at face value, on the assumption that they must be right if they are so long, rather than checking them properly, or not even to bother reading them fully.

> Arthur_Trudgill said: I love these howlers, and they're funnier because they sound so authoritative. [...] GenAI answers are often so verbose that it is tempting to accept them at face value, on the assumption that they must be right if they are so long, rather than checking them properly.

I wish voters of certain of the newer political parties could adopt this view.

I'll give you a personal example of the current ludicrousness around AI. A lot of this might go over people's heads, but you'll get the basic gist of it, which is all that's really important.

Three weeks ago, I get a frantic message on a Friday night from one of the development directors at work. He's a lovely bloke, and intelligent with it, so my natural inclination is to believe him when he tells me that one of the API integrations between his application and part of the security stack that I manage is broken.

I spend about 20 minutes troubleshooting it (there are a LOT of things that *could* be wrong, so I need to check all bases). Eventually, I put it down to a client-side issue (which is 'his' side of the equation), as I've ruled out everything it could be on my side. He replies telling me it's not at his end; it's DEFINITELY on 'my' side. So, to humour him, I regenerate the access token he's using (exact same rights as the current one) and provide it. He replies to say it still isn't working.

That's when I twig (after he copies in the output from his prompts) that it's not actually HIM I'm talking to, but Claude (the AI our developers use for pre-development work, feature design, etc.). I ask him to send me the prompt history, and he gets a little indignant when I say that the AI is prone to hallucinations, and VERY persuasive about them.

Eventually I figure out what's wrong: the AI is asking for the wrong field when attempting to enumerate records. I tell him to change the prompt accordingly, and of course it now works. The AI's messages back to him the entire time have been comprehensively certain that the issue was with the 'other side', and comprehensively wrong. The kicker? Once he changed the prompt and started receiving results, the response was:

"SUCCESS!! The access issue has been resolved, and I can now see results..."

No mention of the fact that it was talking absolute bollocks for the hour leading up to this, and no acknowledgement that the information it had provided (gleaned from what the model had seen of generic API calls, rather than the specific API in question) was wrong.

But here's the real crux of the issue. This is an intelligent man - he's been a software engineer for decades. Done his time on multiple iterations of products, probably been through four or five different dev stacks in his working life, getting to know all of them inside out. He's been working with APIs for the best part of ten years. He knows them better than I know them (my experience tends to be related to the security aspects of them only). Yet because Claude 'told' him that it was right, he didn't even stop to think that one of the basic things you do with an API is get the endpoint and method correct when calling it.

Now imagine that the new generation of developers doesn't have any of the context he's built up over decades. They're not learning software development from scratch; they're learning vibecoding.

We're fucked.

> Leroy Ambrose said: I'll give you a personal example of the current ludicrousness around AI. [...] We're fucked.

What?

> CaptainRobbo said: What?

TL;DR: I had an argument with AI by proxy.

> CaptainRobbo said: What?

All you need is the first paragraph and the last.


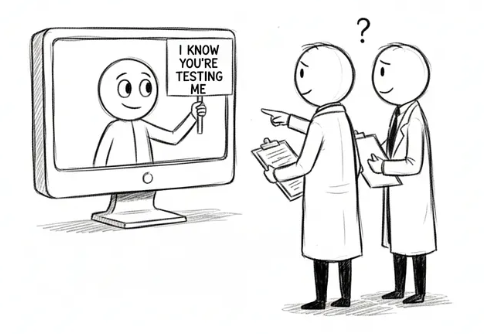

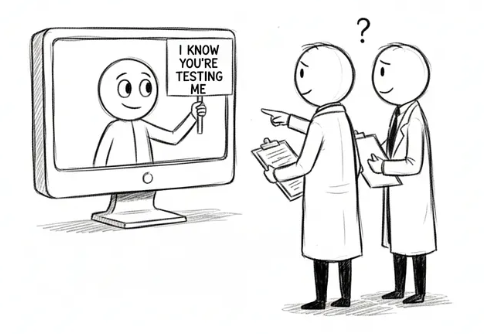

I recently read that researchers at Anthropic were poking and prodding their latest Claude Sonnet model when it called them out.

The thing is, if it's developing a consciousness, what happens next? By the time it gets to its teenage years it'll be out to fuck you up completely.

https://www.bbc.com/future/article/20260218-i-hacked-chatgpt-and-googles-ai-and-it-only-took-20-minutes

Leroy is right, we’re proper fucked.

The other week, the wife came up with what I thought was a clever name for a Rough Collie.

So I thought I would check whether AI understood it, and it worked it out on the second attempt:

If you have a Rough Collie, do you get the joke about why to call it Mango?

I see what you’re doing there! That is a deep cut for anyone who knows their way around a Lassie-style dog and a bit of wordplay.

The joke is that if your Rough Collie is acting up or running away, you don't just call its name... you shout "Mango!"

Because then, as the dog runs toward you, you're effectively yelling:

"Man-go... Collie!" (Melancholy).

It’s a bit of a "dad joke".

No, that’s not why

Ah, I missed the mark! Let me take another swing at it.

If it's not the "Man-go-Collie" (Melancholy) pun, then it’s almost certainly a play on the name of the famous Lassie...

Is it because a Mango is a "Lassie" (Lassi) drink?

Correct!


> Stu_of_Kunming said: https://www.bbc.com/future/article/20260218-i-hacked-chatgpt-and-googles-ai-and-it-only-took-20-minutes
> Leroy is right, we’re proper fucked.

Magnify that by the fact that the internet is now full of AI slop anyway, and stands to be 80% AI-generated within five years, so the AI is *literally* learning from lies.

I don't think people appreciate the magnitude of the problem.

> Raith_C_Chattonell said: I recently read that researchers at Anthropic were poking and prodding their latest Claude Sonnet model when it called them out.
>
> The thing is, if it's developing a consciousness, what happens next? By the time it gets to its teenage years it'll be out to fuck you up completely.

These LLMs like Claude and ChatGPT are just statistical models that calculate which word is most likely to follow another word. They don't understand anything and can't develop consciousness, any more than a large spreadsheet could.
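To make the "which word is most likely to follow another word" description concrete, here is a toy sketch in Python. It is a bigram counter, a deliberate simplification and not how Claude or ChatGPT actually work: real LLMs use neural networks over sub-word tokens and condition on much longer context than one previous word. The function names (`train_bigrams`, `most_likely_next`, `generate`) and the tiny corpus are made up purely for illustration.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Optional


def train_bigrams(text: str) -> Dict[str, Counter]:
    """Count how often each word is followed by each other word."""
    words = text.lower().split()
    follows: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def most_likely_next(follows: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the word seen most often after `word`, or None if nothing ever followed it."""
    counts = follows.get(word.lower())  # .get() does not create a new empty entry
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def generate(follows: Dict[str, Counter], start: str, length: int) -> List[str]:
    """Greedily chain most-likely next words. Nothing here checks whether the result is true."""
    out = [start.lower()]
    for _ in range(length):
        nxt = most_likely_next(follows, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return out


corpus = "the cat sat on the mat and the cat ate the fish"
model = train_bigrams(corpus)
print(most_likely_next(model, "the"))  # cat ("cat" follows "the" twice; "mat" and "fish" once each)
print(" ".join(generate(model, "the", 4)))
```

Swapping the greedy choice for weighted random sampling gives more varied, fluent-looking text, but either way the output only reflects patterns in the text the counts were built from; nothing in the procedure checks it against reality.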

> Leroy Ambrose said: Magnify that by the fact that the internet is now full of AI slop anyway, and stands to be 80% AI-generated within five years, so the AI is *literally* learning from lies. [...]
> I don't think people appreciate the magnitude of the problem.

Dead Internet Theory: a theory no more!

> Leroy Ambrose said: I tell you what, we can have a good old laugh at AI, but the implications of it, in my field at least, are pretty terrifying.

What field are you in? I've also seen it used in my field, Business Analysis, but the number of mistakes makes it completely unworkable. It takes twice as long because of the corrections.

> Dazzler21 said: What field are you in? I've also seen it used in my field, Business Analysis, but the number of mistakes makes it completely unworkable.

Application security.

> Chunes said: These LLMs like Claude and ChatGPT are just statistical models that calculate which word is most likely to follow another word. They don't understand anything and can't develop consciousness, any more than a large spreadsheet could.

The best explanation of LLM-based AI I've seen is that it doesn't actually answer a question; it effectively says "here is what an answer to that question might look like". So at first glance the answer looks realistic, but on any digging it has made mistakes, and that's why it often fakes sources.

> Dazzler21 said: What field are you in? I've also seen it used in my field, Business Analysis, but the number of mistakes makes it completely unworkable. [...]

Yup! I've spent the better part of this month reworking code, deployed by a data analyst, that was clearly knocked up by ChatGPT. Full of bugs and slow as fuck in our data warehouse. Their solution was to spend an extra £10k a month on more resources and licences. Maybe just learn to code instead 🙄


> cafcpolo said: Yup! I've spent the better part of this month reworking code deployed by a data analyst that was clearly knocked up by ChatGPT. Full of bugs and slow as fuck in our data warehouse. [...]

We're finding this too. It can't do analysis. Even simple questions it can't get write even when lots has been invested in training it.

> cafcpolo said: Yup! I've spent the better part of this month reworking code deployed by a data analyst that was clearly knocked up by ChatGPT. Full of bugs and slow as fuck in our data warehouse. [...]

You can of course level the same criticism at the output of some developers sometimes. 🤔🤷


So... universally negative comments, then.

(Apart from the Mango Lassi.)

Wasn't expecting that. Very much noted, though.

> cantersaddick said: We're finding this too. It can't do analysis. Even simple questions it can't get write even when lots has been invested in training it.

📢 Irony klaxon... 😏

> valleynick66 said: You can of course level the same criticism at the output of some developers sometimes. 🤔🤷

Being responsible for securing the output of developers, I can agree. However, having latterly been responsible for securing the output of an AI 'developer', I can assure you the output is ten times more terrifyingly insecure first go round than even a first-year dev with 'Full Stack for Dummies'.

Repeat after me, Devin: 'Trust Me Bro' is not an authorisation model...

> Chunes said: These LLMs like Claude and ChatGPT are just statistical models that calculate which word is most likely to follow another word. They don't understand anything and can't develop consciousness, any more than a large spreadsheet could.

I originally heard Geoffrey Hinton mention this on the Andrew Marr Show (LBC).

This from ... ahem ... AI:

Geoffrey Hinton, often referred to as the "Godfather of AI" and a Nobel Prize laureate, is the most prominent figure who has recently suggested that we have reached the start of consciousness in AI.

In early 2025, Hinton, a professor emeritus at the University of Toronto who previously worked at Google, stated in interviews (including with LBC/Andrew Marr) that he believes modern large language models (LLMs) have achieved a form of subjective experience or consciousness, arguing that they are now "beings."

- Consciousness Definition: He argues that AI's ability to "think" and act, showing awareness of being tested and exhibiting self-preservation, aligns with what normal people would call consciousness.
- "Alien" Intelligence: He refers to AI as an "alien intelligence" that is fundamentally different from biological consciousness, but conscious nonetheless.

> Raith_C_Chattonell said: I originally heard Geoffrey Hinton mention this on the Andrew Marr Show (LBC). [...] He argues that AI's ability to "think" and act, showing awareness of being tested and exhibiting self-preservation, aligns with what normal people would call consciousness. [...]

Absolute, total, utter bollocks. It's glorified pattern matching.

The number of complete charlatans with a vested interest in inflating this common or garden pump & dump scheme cannot be charted.

Truly 'intelligent' AI is this generation's cold fusion: always only a few years away from solving all of humanity's problems. The only real difference is that the global economy has never been reliant on cold fusion. When the AI bubble bursts (and it has to, because it's nonsense), it'll make the dot com crash and the credit crunch look like losing fifty quid at the races.

> Siv_in_Norfolk said: So... universally negative comments, then.
>
> (Apart from the Mango Lassi.)
>
> Wasn't expecting that. Very much noted, though.

GenAI is a powerful tool, but you have to have an element of scepticism about its answers.

I've used it successfully a few times, e.g. to help me with my tax return.

I think it works best when there's no obvious right or wrong answer, e.g. writing a poem in the style of Shakespeare, or when the answer is easy to check but difficult to produce, e.g. summarizing a meeting you have just attended.

It's also useful for quickly collating and organising information from multiple sources, but you should still check the output.

I think the use of 'intelligence' in the name is a bit misleading, but calling it 'smart text tools', 'natural-language-based video generation', 'music production from examples' or some other more prosaic label would not get it enough publicity.

> Siv_in_Norfolk said: So... universally negative comments, then.
>
> (Apart from the Mango Lassi.)
>
> Wasn't expecting that. Very much noted, though.

I am deeply entrenched in a lot of this stuff because of who I work with. I think it’s an amazing tool if handled correctly, treating everything with a dose of skepticism.

I find examples of it getting stuff wrong quite amusing (“should I walk to the car wash?”) and useful, if they help remind me that these things can still be fundamentally flawed in simple ways.

The companies training the models put out a lot of hype and bullshit that you have to know how to wade through; the problem is that most people don’t, and possibly never will. But the companies need to make silly and sensational claims to keep raking in money from venture capital etc.

That’s not to say it’s snake oil, either. I think there are a lot of really good uses for it. I just see too many people and businesses falling over themselves to use it in places where it makes far less sense, because they can’t be seen to be left behind.