
Chat GPT and other AI guff

Comments

-

In my defence I've been ill and was in bed half asleep.

Leroy Ambrose said:

📢 Irony klaxon... 😏

cantersaddick said:

We're finding this too. It can't do analysis. Even simple questions it can't get write even when lots has been invested in training it.

cafcpolo said:

Yup! I've spent the better part of this month reworking code deployed by a data analyst that was clearly knocked up by ChatGPT. Full of bugs and slow as fuck in our data warehouse. Their solution was to spend an extra £10k a month on more resources and licenses. Maybe just learn to code instead 🙄

Dazzler21 said:

What field are you in? I've also seen it used in my field, Business Analysis, but the amount of mistakes makes it completely unworkable. It takes twice the time due to corrections.

Leroy Ambrose said:

I tell you what, we can have a good old laugh at AI, but the implications of it - in my field at least - are pretty terrifying.

1 -

But does it know the difference between right and wrong. Or right and write ✍🏽

cantersaddick said:

We're finding this too. It can't do analysis. Even simple questions it can't get write even when lots has been invested in training it.

2 -

I think in a decade or less many people are not gonna be able to make a basic decision in life day to day without first consulting with their little AI 'friend'.

Leroy Ambrose said:

I'll give you a personal example of the current ludicrousness around AI. A lot of this might go over people's heads, but you'll get the basic gist of it, which is all that's really important.

Three weeks ago, I get a frantic message from one of the development directors at work on a Friday night. He's a lovely bloke, and intelligent with it. So my natural inclination is to believe him when he tells me that one of the API integrations between his application and part of the security stack that I manage is broken. I spend about 20 minutes troubleshooting it (there are a LOT of things that *could* be wrong, so I need to check all bases). Eventually, I put it down to a client-side issue (which is 'his' side of the equation), as I've literally ruled out anything it could be on my side. He replies telling me it's not at his end - it's DEFINITELY on 'my' side. So, to humour him, I regenerate the access token that he's using (exact same rights as the current one) and provide it. He replies to say it still isn't working. That's when I twig (after he copies the output from his prompts) that it's not actually HIM I'm talking to, but Claude (the AI our developers use for pre-development work, feature design etc). I ask him to send me the prompt history - and he gets a little indignant when I say that the AI is prone to hallucinations, and VERY persuasive about them. Eventually I figure out what's wrong (the AI is asking for the wrong field when attempting to enumerate records). I tell him to change the prompt accordingly, and it, of course, now works. The AI's messages back to him the entire time have been comprehensively certain that the issue was with the 'other side' - and comprehensively wrong. The kicker? Once he changed the prompt and started receiving results, the response was:

"SUCCESS!! The access issue has been resolved, and I can now see results..."

No mention of the fact that it was talking absolute bollocks for an hour leading up to this, or any acknowledgement that the information it had provided (gleaned from its LLM's experience of generic API calls, rather than the specific API in question) was wrong.

But here's the real crux of the issue. This is an intelligent man - he's been a software engineer for decades. Done his time on multiple iterations of products, probably been through four or five different dev stacks in his working life, getting to know all of them inside out. He's been working with APIs for the best part of ten years. He knows them better than I know them (my experience tends to be related to the security aspects of them only). Yet because Claude 'told' him that it was right, he didn't even stop to think that one of the basic things you do with an API is get the endpoint and method correct when calling it.

Now imagine that the new iteration of developers doesn't have any of the past context he's got - built up over decades. They're not learning software development from scratch, they're learning vibecoding.

We're fucked.

As probably the last generation that had a youth pre the Internet and its related tech pervading every aspect of life, I noticed it myself with Google.

25 years ago, to go on holiday you'd generally walk into a travel agent's, give a rough outline of what you're after, budget etc., and get a brochure with a few choices.

Google turned that into a much broader range of choice, but along with that comes the angst of "what if I make the wrong choice?" and paralysis by analysis.

Fast forward to 2026 and I know people who are using ChatGPT to literally analyse every aspect of their lives... from health issues to how to approach basic conversations with colleagues and friends.

Treating AI like some kind of trusted higher power. Some experienced colleagues are now incapable of making a decision without first having had reassurance from AI, which more often than not is far from perfect, if at all accurate.

It's concerning how people are surrendering their own initiative and their confidence in their own judgement and ability, not really living their lives or making their own decisions but rather being led passively by an algorithm which may or may not be accurate, or even have their true best interests considered.

Lots of wonderful possibilities and efficiencies potentially with this technology, but the cost to basic human being is concerning, in the same way the Internet and social media, for all their wonders, have left many of us yearning for a less tech-intrusive time.

9 -

Throwing in mistakes like that is a way to make sure that AI can't learn from what I put online.

Alwaysneil said:

But does it know the difference between right and wrong. Or right and write ✍🏽

9 -

Fixed

Chunes said:

These Palace ultras are just statistical models that calculate which word is most likely to follow another word. They don't understand anything and can't develop consciousness, any more than a large spreadsheet could.

Raith_C_Chattonell said:

I recently read that researchers at Anthropic were poking and prodding their latest model of Claude Sonnet when it called them out.

The thing is, if it's developing a consciousness, what happens next? By the time it gets to its teenage years it'll be out to fuck you up completely.

0 -

The other week I asked ChatGPT for an up and coming restaurant in south east London. We went through different ones until we settled on one in Deptford - a curry place with an up and coming chef who’s worked in some top kitchens and loads of details. I have OpenTable linked to my ChatGPT so I asked it to find a table available there. It took a moment and said it couldn’t find it on OpenTable, but it did say it could give me a website. So I asked for a website: “I can’t find a website for this restaurant but I have an Instagram”. So I started to twig. I asked for the Instagram, again to no avail. So I flat out asked it “did you just make this up?” Its response? “Ah! Good catch!”. I asked it why it had made it up and it basically boiled down to “it sounded plausible so I said it”.

This was just for choosing a restaurant. Imagine this level of hallucination for things that actually matter.

8 -

https://x.com/anthropicai/status/2025997928242811253?s=46&t=ynww82GMl7VKBjthBflU0g

Anthropic upset that Chinese labs have been using their models to create their own, much cheaper, versions.

Irony seems to be lost on them.

2 -

https://www.citriniresearch.com/p/2028gic

This is hyperbole, but a lot of it is absolutely possible, and probable, even - given the lack of regulation and governance, especially in the US

2 -

My biggest concern is the cost implications on other computing. Memory and Storage are getting out of hand, crippling the consumer market already. Also the financial losses from these companies are HUGE, any other company would be in ruins.

2 -

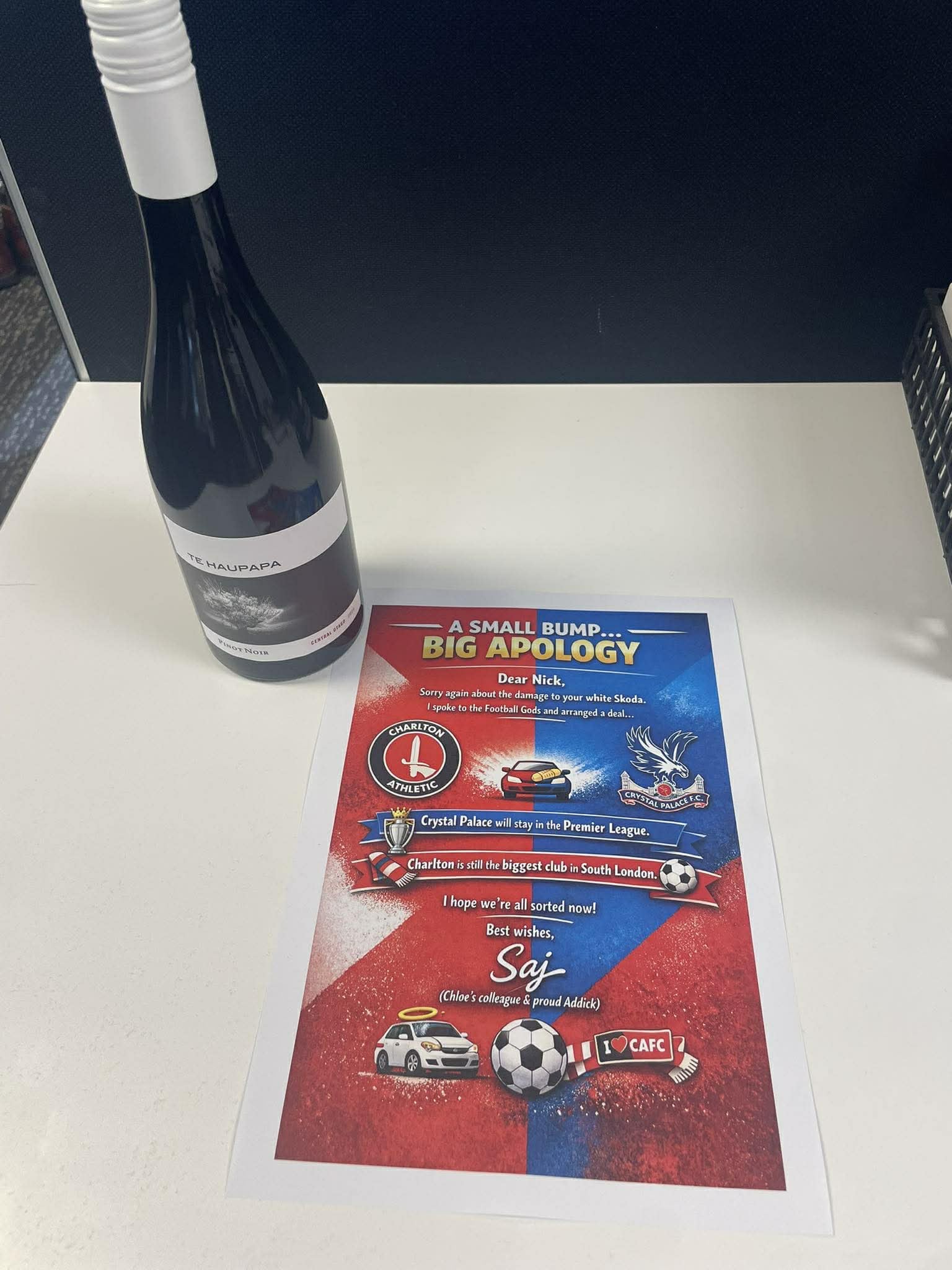

I bumped into my colleague's Dad's car (he lent it to her for the day). He happens to support Palace, so I got ChatGPT to do an 'I'm Sorry' card.

(I would obviously love them to get relegated, but I think they're fairly safe - and after all, I bumped into his car, so I better be nice).

4 -


That's an immediate concern, but it pales into insignificance compared to the wider economic implications imo. Just taking one example from the above piece, a huge amount of the service economy is based around people taking a fee for doing work for you - accountants, estate agents, travel agents to name just a few. When an AI agent that costs the price of a subscription to Claude can do that same job for a fraction of the cost... Doesn't take a genius to figure out that a huge number of white collar professions are within 2-3 years of being either entirely obliterated, or massively downsized. My own job is skilled and in a desirable sector - but it's not immune either - I see multiple offerings on a daily basis that threaten to make a large part of what I do obsolete within 12-18 months.

The complete absence of regulation of the AI giants in the US will hasten the economic catastrophe that's ahead - the current administration quite literally could not have arrived at a worse time in history.

Dazzler21 said:

My biggest concern is the cost implications on other computing. Memory and Storage are getting out of hand, crippling the consumer market already. Also the financial losses from these companies are HUGE, any other company would be in ruins.

5 -

I can see all this in my own field (patents). I've been asked to trial a few different packages. A large part of the job is being able to work your way through many technical documents, summarise them, find points of similarity and difference, and then provide advice. Nothing burns through the documents like AI, and it could be a very useful tool. I've been doing this over 20 years and would know what to ask and how to interpret the results, or whether it has drafted something correctly. You can also use it for standard letters and other time saving uses. Problem is, all the dog work that is being replaced is the work of paralegals and trainees, and this is the "hard yards" needed to learn their craft. Maybe it's learning new skills I didn't need to learn (I can still use a fax machine) but the fundamentals remain the same and I'm worried that we could be pulling up the ladder and depriving others of opportunities just to save money short term.

I've told my kids that any job that relies on human interaction is safer, but I'm not particularly optimistic.

9 -

Yeah, I made the point earlier in the thread that we're losing an entire layer of software development - and that layer is the entry point to the profession. It must be an absolutely terrifying time to be young 😕

McBobbin said:

I can see all this in my own field (patents). I've been asked to trial a few different packages. A large part of the job is being able to work your way through many technical documents, summarise them, find points of similarity and difference, and then provide advice. Nothing burns through the documents like AI, and it could be a very useful tool. I've been doing this over 20 years and would know what to ask and how to interpret the results, or whether it has drafted something correctly. You can also use it for standard letters and other time saving uses. Problem is, all the dog work that is being replaced is the work of paralegals and trainees, and this is the "hard yards" needed to learn their craft. Maybe it's learning new skills I didn't need to learn (I can still use a fax machine) but the fundamentals remain the same and I'm worried that we could be pulling up the ladder and depriving others of opportunities just to save money short term.

I've told my kids that any job that relies on human interaction is safer, but I'm not particularly optimistic.

7 -

I work in international trade and find it invaluable for research and training.

But … and it’s a big but … 25% of the info can be misleading if not downright wrong.

Not a problem for me because I can review and update it. But those new to the business take it as gospel, reflected by the number of incorrect posts they make on LinkedIn and elsewhere pretending to be experts.

Fortunately it’s fairly easy for me to detect the AI/LLM originated posts but a lot of people accept them as correct.

4 -

Lawyers have been getting into trouble for citing fake cases to support their pleadings etc... Never mind litigants in person: if a qualified lawyer can't be bothered to check their authorities, having "written" something in a fraction of the time, they deserve what they get.

5 -

This is massive for me, the work market is moving from a pyramid shape to a diamond shape, really really wouldn't want to be leaving uni around now. I would definitely, were I a parent of a kid in school, be looking at jobs least likely to be replaced by machines (eg plumbing) and seeing that as a much safer career.

Leroy Ambrose said:

Yeah, I made the point earlier in the thread that we're losing an entire layer of software development - and that layer is the entry point to the profession. It must be an absolutely terrifying time to be young 😕

4 -

Bet they still billed for the full amount of time they would have needed to properly research the case law themselves.

McBobbin said:

Lawyers have been getting into trouble for citing fake cases to support their pleadings etc... never mind litigants in person, if a qualified lawyer can't be bothered to check their authorities having "written" something in a fraction of the time they deserve what they get

2 -

Have no doubt

Rizzo said:

Bet they still billed for the full amount of time they would have needed to properly research the case law themselves.

0 -

If the restaurant isn't open yet then it doesn't have much information/data to go on, so the response you got isn't really surprising.

Diebythesword said:

The other week I asked ChatGPT for an up and coming restaurant in south east London. We went through different ones until we settled on one in Deptford - a curry place with an up and coming chef who’s worked in some top kitchens and loads of details. I have OpenTable linked to my ChatGPT so I asked it to find a table available there. It took a moment and said it couldn’t find it on OpenTable, but it did say it could give me a website. So I asked for a website: “I can’t find a website for this restaurant but I have an Instagram”. So I started to twig. I asked for the Instagram, again to no avail. So I flat out asked it “did you just make this up?” Its response? “Ah! Good catch!”. I asked it why it had made it up and it basically boiled down to “it sounded plausible so I said it”.

This was just for choosing a restaurant. Imagine this level of hallucination for things that actually matter.

0 -

I don't think the restaurant actually exists at all...

Kindoncasella said:

If the restaurant isn't open yet then it doesn't have much information/data to go on, so the response you got isn't really surprising.

3 -


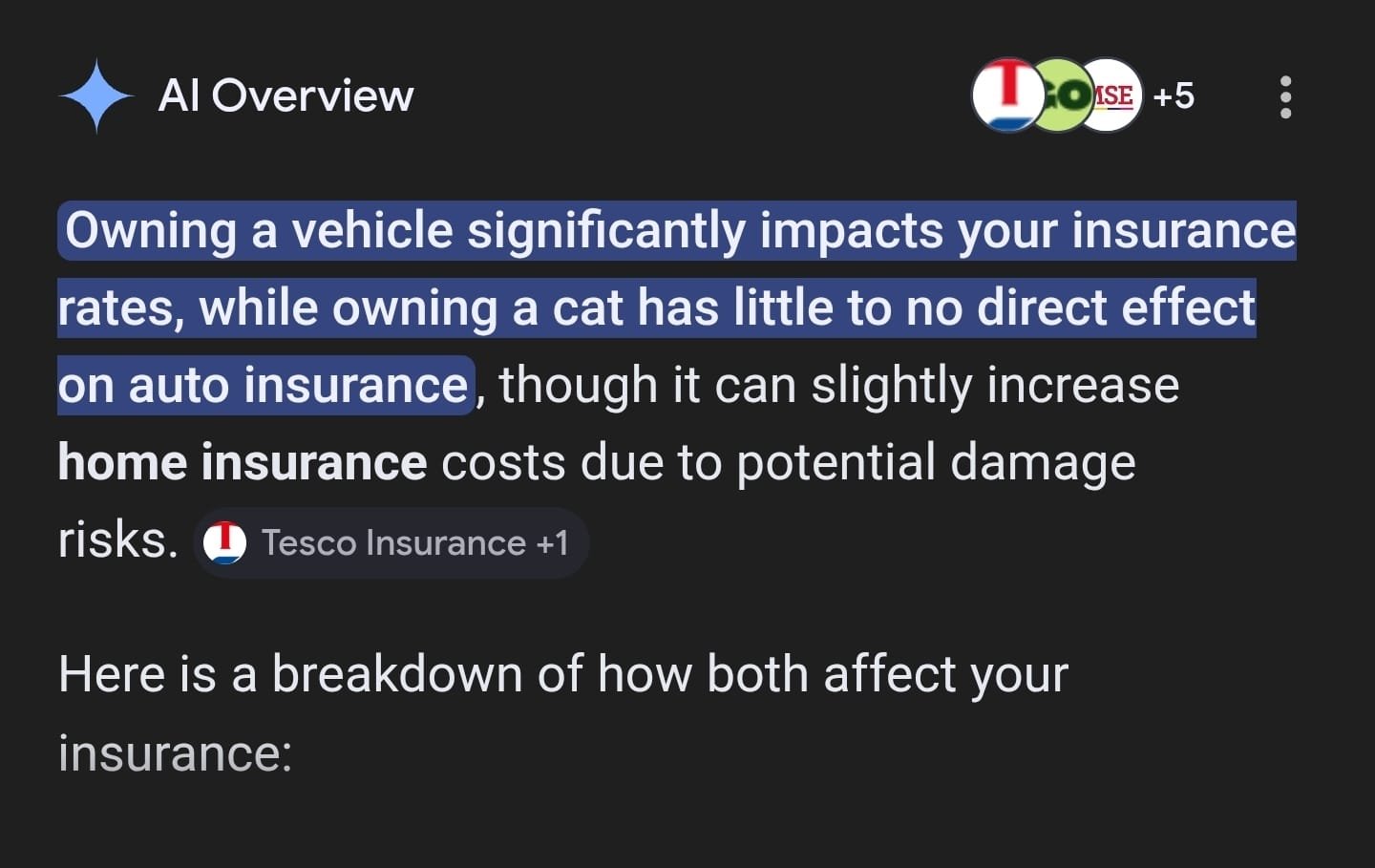

Question: Does owning a Cat N vehicle affect my insurance premium?

Answer:

15 -

-

If the pandemic taught us anything, it is that lawyers, accountants, bankers, software engineers (myself included), economists, analysts, etc, are not as much key workers as their salaries suggest.

If GenAI impacts employment in those fields, there's still plenty of important things that need work in the country, it's just that they are not highly regarded or respectable, like reducing pollution, improving social welfare, agriculture, housing, decreasing violence, etc.

Leroy Ambrose said:

https://www.citriniresearch.com/p/2028gic

This is hyperbole, but a lot of it is absolutely possible, and probable, even - given the lack of regulation and governance, especially in the US

3 -

OK boomer

Arthur_Trudgill said:

If the pandemic taught us anything, it is that lawyers, accountants, bankers, software engineers (myself included), economists, analysts, etc, are not as much key workers as their salaries suggest.

If GenAI impacts employment in those fields, there's still plenty of important things that need work in the country, it's just that they are not highly regarded or respectable, like reducing pollution, improving social welfare, agriculture, housing, decreasing violence, etc.

4 -

Until everyone is a tradey and costs are driven down as a result of an oversaturated market.

Huskaris said:

This is massive for me, the work market is moving from a pyramid shape to a diamond shape, really really wouldn't want to be leaving uni around now. I would definitely, were I a parent of a kid in school, be looking at jobs least likely to be replaced by machines (eg plumbing) and seeing that as a much safer career.

0 -

Generation X actually, just.

Anyway, for me, you still need lawyers, accountants, software engineers, etc. But with GenAI maybe you won't need as many, or they will provide a better service or be more affordable.

But I do think that the wealth/savings generated by GenAI will not be distributed fairly, or channeled into the right problems.

Leroy Ambrose said:

OK boomer

2 -

Until they start deploying tradie robots (essentially what Elon Musk is doing with Tesla now)

Dazzler21 said:

Until everyone is a tradey and costs are driven down as a result of an oversaturated market.

0 -

Tesla is as far away from developing tradie robots as any small child with a robotics set is. The con job that Lyle Langley has pulled on the general public is absolutely unbelievable.

Diebythesword said:

Until they start deploying tradie robots (essentially what Elon Musk is doing with Tesla now)

1 -

For those interested: there is a bit of a movement to quit ChatGPT due to OpenAI's links with ICE and mass surveillance, as well as automated weapons contracts. If you are able, it's worth moving to Claude or another AI platform. It will be important to send a message to Silicon Valley that they need to be careful how they use this tech going forwards.

https://www.theguardian.com/commentisfree/2026/mar/04/quit-chatgpt-subscription-boycott-silicon-valley

3 -

It won't make a jot of difference. They're literally all bending the knee to Trump. They can't survive without it.

0